In the world of software development, ensuring that your application works as intended is crucial. This is where testing comes into play, serving as a safeguard against unexpected behavior and bugs. But how do you test effectively? This is where concepts like mocking, stubbing, and contract testing become vital. Let’s dive into these techniques and see how they can enhance your testing strategy.

- The Essence of Mocking and Stubbing

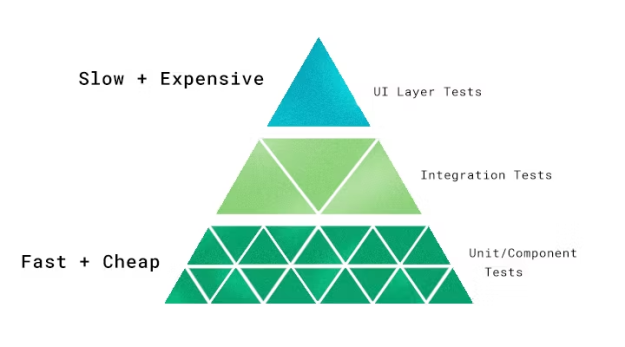

Mocking and stubbing are techniques used primarily in unit and component tests, but their usefulness extends beyond these. They are about creating fake versions of external or internal services to streamline and stabilize testing processes.

Mocking refers to creating a faux version of an external or internal service to replace the real one during testing. This is especially useful when your code interacts with object properties rather than behaviors. By mocking dependencies, you enable your tests to run more quickly and reliably since they are not bogged down by real-time data fetching or complex logic processing.

On the other hand, stubbing involves creating a stand-in for certain behaviors of an object rather than the entire object. This technique is useful when your implementation interacts only with specific behaviors of an object, enabling faster and more focused tests.

- Practical Applications

When your code uses external dependencies, such as system calls or database access, mocking or stubbing comes in handy. For example, instead of actually creating or deleting a file during a test, you can mock or stub the file system’s responses. This not only speeds up the testing process but also ensures that your tests remain independent and easy to manage.

- Contract Testing in Microservices

In a micro-services architecture, services interact based on predefined “contracts” detailing expected requests and responses. Contract testing verifies that these interactions meet the agreed-upon standards. Unlike traditional integration testing, contract testing focuses on the interfaces between services, making it a leaner and more targeted approach.

This type of testing is beneficial for checking the integrity and reliability of service interactions without deploying an entire system. It’s particularly effective in continuous integration pipelines, as it ensures that changes in one service don’t break the contract with another.

- The Role of Mocks and Stubs

In contract testing, mocks and stubs play a crucial role. By simulating the services that an application interacts with, developers can check the consistency and reliability of the application’s responses without relying on live services. This approach significantly reduces testing time and increases reliability.

- Conclusion

Testing is an integral part of the software development lifecycle, and techniques like mocking, stubbing, and contract testing are essential tools in a developer’s arsenal. By understanding and implementing these strategies, we can ensure that your tests are both efficient and effective, leading to more reliable and maintainable software.

The link to the initial blog post: https://circleci.com/blog/how-to-test-software-part-i-mocking-stubbing-and-contract-testing/

From the blog CS@Worcester – Coding by asejdi and used with permission of the author. All other rights reserved by the author.